TL;DR: A boutique hotel with 8,000 monthly homepage sessions can A/B test a virtual tour, but the plan has to start from the sample size math. At a 2.0% baseline, detecting a +12% conversion lift with 80% statistical power takes roughly 113,000 sessions, about 14 months of traffic at that volume. That makes the right metric (conversion rate, not RevPAR) and the right tool (Google Optimize is dead; Hotjar's experiments add-on or VWO Lite work; Optimizely is overkill) essential. This post walks the sample size math for a typical boutique, the metric hierarchy, the tools that actually work on Cloudbeds and Mews, and the three test designs that produce signal vs. the three that produce noise.

You may have read that you need 50,000 sessions per arm to A/B test anything. The real requirement depends on two inputs: your baseline conversion rate and the size of the lift you want to detect. Hotel direct booking has a higher base conversion rate (1.4–3.4% on boutique room pages) and generally much larger lift sizes (+8% to +14% from a virtual tour intervention) than a typical SaaS funnel. Both factors reduce the sample size you need, but at a 2.0% baseline a +12% lift still takes roughly 57,000 sessions per arm.

The Sample Size Math

To detect a lift, you need enough data to distinguish "real change" from "random noise." The minimum sample size per variant for a two-sided test at 95% confidence and 80% power, assuming a baseline conversion rate of 2.0% and a target relative lift:

| Detectable lift | Sessions per variant | Total sessions needed |
|---|---|---|
| +5% (2.0% → 2.10%) | ~315,200 | ~630,400 |
| +10% (2.0% → 2.20%) | ~80,700 | ~161,400 |
| +12% (2.0% → 2.24%) | ~56,600 | ~113,100 |
| +15% (2.0% → 2.30%) | ~36,700 | ~73,400 |
| +20% (2.0% → 2.40%) | ~21,100 | ~42,200 |
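The table comes from the standard normal-approximation sample size formula for comparing two proportions: n = (z₁₋α/₂·√(2p̄(1−p̄)) + z₁₋β·√(p₁(1−p₁) + p₂(1−p₂)))² / (p₂ − p₁)², where p₁ is the baseline rate, p₂ the lifted rate, and p̄ their average. A minimal sketch, assuming a two-sided z-test with pooled variance under the null and unpooled variance under the alternative; the function name `sessions_per_variant` is ours, not any tool's API, and testing tools run their own calculators that may return somewhat different numbers.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sessions_per_variant(baseline: float, relative_lift: float,
                         alpha: float = 0.05, power: float = 0.80) -> int:
    """Per-variant sample size for a two-sided, two-proportion z-test."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)            # about 0.84 for 80% power
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p2 - p1) ** 2)


if __name__ == "__main__":
    for lift in (0.05, 0.10, 0.12, 0.15, 0.20):
        n = sessions_per_variant(0.02, lift)
        print(f"+{lift:.0%}: {n:,} per variant, {2 * n:,} total")
```

Running it at a 2.0% baseline reproduces the per-variant column of the table above.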

Virtual tour interventions typically produce +11% to +14% relative lift, per our benchmarks. Detecting a +12% lift takes about 113,000 total sessions. Splitting traffic 50/50 across two concurrent arms doesn't shorten that, because both arms draw on the same pool: a property doing 8,000 monthly sessions needs roughly 14 months to collect it.

That's far longer than an enterprise test, which is why the metric and test design choices below matter so much.

Pick the Right Metric

Three metrics get reported as "conversion lift" in hotel CRO. Only one of them gives you a clean A/B signal at boutique sample sizes.

| Metric | Sample size needed | When to use |
|---|---|---|
| Booking conversion rate (sessions → completed bookings) | Lowest | Use this for tour A/B tests |
| Average order value (booking value per booking) | Medium | Use for room-upgrade or upsell tests, not tour tests |
| RevPAR per session | Highest | Avoid for short tests; too noisy on modest traffic |

The temptation is to measure RevPAR per session because that's the metric your owner cares about. The problem: RevPAR per session is dominated by ADR variance, length-of-stay variance, and seasonality — all of which produce more variance than the tour intervention itself. You need 4–10× more traffic to detect a RevPAR lift than a conversion-rate lift of the same economic magnitude.
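A simplified model shows where the extra traffic goes. It is an assumption, not a measurement: the tour changes only whether a session books, and booking value (ADR × LOS) varies across bookings with coefficient of variation CV = standard deviation / mean. For the same relative lift, a revenue-per-session test then needs 1 + CV² / (1 − p) times the sessions of a conversion-rate test, where p is the baseline conversion rate. The function name is hypothetical.

```python
def traffic_multiplier(booking_value_cv: float, baseline: float = 0.02) -> float:
    """Sessions a revenue-per-session test needs, relative to a conversion test.

    Assumes the tour lifts only the booking probability, not booking value,
    and that booking value has coefficient of variation booking_value_cv.
    Derived from Var(revenue per session) = p(sigma^2 + mu^2) - (p * mu)^2.
    """
    return 1 + booking_value_cv ** 2 / (1 - baseline)


# Hypothetical CVs; compute your own from a PMS export of booking values.
for cv in (0.5, 1.0, 2.0):
    print(f"booking-value CV {cv}: {traffic_multiplier(cv):.1f}x the sessions")
```

The model leaves out seasonality and ADR drift during the test, which the paragraph above also names, so treat its output as roughly a floor on the extra traffic a revenue metric costs.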

Run the conversion-rate test. Convert the lift to RevPAR after the test ends, using your average ADR × LOS over the test period.
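A minimal sketch of that conversion, assuming the incremental bookings carry the test period's average ADR and LOS and counting room revenue only. Every input in the example (sessions, ADR, LOS, room count) is hypothetical, as are the names. RevPAR is room revenue divided by available room-nights, so the last step spreads the extra revenue across rooms × days.

```python
from dataclasses import dataclass


@dataclass
class LiftInputs:
    monthly_sessions: int   # sessions on the tested page(s) per month
    baseline_cr: float      # control conversion rate, e.g. 0.02
    relative_lift: float    # measured lift, e.g. 0.12 for +12%
    adr: float              # average daily rate over the test period
    los: float              # average length of stay, in nights
    rooms: int              # rooms in inventory
    days: int = 30          # days in the projected month


def incremental_room_revenue(x: LiftInputs) -> float:
    """Extra monthly room revenue implied by the conversion lift."""
    extra_bookings = x.monthly_sessions * x.baseline_cr * x.relative_lift
    return extra_bookings * x.adr * x.los


def incremental_revpar(x: LiftInputs) -> float:
    """Extra revenue per available room-night (room revenue / room-nights)."""
    return incremental_room_revenue(x) / (x.rooms * x.days)


# Hypothetical property: every number below is illustrative, not a benchmark.
example = LiftInputs(monthly_sessions=8_000, baseline_cr=0.02, relative_lift=0.12,
                     adr=220.0, los=2.5, rooms=20)
print(f"Extra room revenue per month: {incremental_room_revenue(example):,.0f}")
print(f"RevPAR lift: {incremental_revpar(example):.2f}")
```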

Tools That Work on Cloudbeds and Mews

Google Optimize was sunset in late 2023. The replacement landscape:

| Tool | Free tier | Cloudbeds compatible | Mews compatible | Notes |
|---|---|---|---|---|
| VWO Lite | Yes (limited) | Yes | Yes | Best free option; simple A/B variants, decent reporting |
| Hotjar Experiments | Add-on | Yes | Yes | Works well if you already use Hotjar for heatmaps |
| Convert.com | No | Yes | Yes | Best mid-market option; cleaner UI than VWO |
| Optimizely | No | Yes | Yes | Overkill for boutiques; enterprise pricing |
| GrowthBook (open source) | Yes (self-hosted) | Yes (with engineering) | Yes (with engineering) | Right pick if you have a dev resource |

For a boutique without engineering bandwidth, VWO Lite or Hotjar Experiments are the right answer. Both let you swap or add a virtual tour element to a page and split traffic 50/50 without touching the booking engine.
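The tools handle the split for you; the sketch below only illustrates the common idea behind a stable 50/50 split, assuming hash-based bucketing on a first-party visitor ID (a hypothetical cookie value here). It is not VWO's or Hotjar's actual implementation, and the function and experiment names are made up.

```python
import hashlib


def assign_variant(visitor_id: str, experiment: str = "tour-vs-no-tour") -> str:
    """Deterministically bucket a visitor into A or B with a 50/50 split.

    Hashing the experiment name with the visitor ID keeps each visitor in the
    same arm on every visit, while different experiments split independently.
    """
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # 0..99
    return "A" if bucket < 50 else "B"


if __name__ == "__main__":
    ids = [f"visitor-{i}" for i in range(10_000)]
    share_b = sum(assign_variant(v) == "B" for v in ids) / len(ids)
    print(f"share in B: {share_b:.3f}")
```

Hashing instead of flipping a coin on each page view means a returning guest sees the same version every time, which matters when a booking decision spans several visits.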

For Cloudbeds Sites users specifically: VWO and Hotjar both work via a single JS snippet in the Sites custom-script field. For Mews Distributor users: tests run on the property landing page (your own domain), not inside Distributor itself — Mews doesn't allow third-party scripts in the booking funnel by design.

The Three Tests That Actually Produce Signal

Test 1 — Tour vs. No Tour on the Room-Type Page

The cleanest, highest-leverage test. Variant A: existing room page. Variant B: same page with the Matterport iframe added below the photo gallery.

Primary metric: room-page conversion rate (sessions to that page → completed bookings of that room type).

Expected lift: +11% to +14% based on industry data.

Sample size to detect a +12% lift at 80% power (2.0% baseline): ~113,000 sessions across both variants.

Test 2 — Tour Above Fold vs. Below Fold

Compare variant A (tour as second section, below the hero — see homepage heatmap data) vs. variant B (tour above the fold, replacing the hero).

Primary metric: site-wide conversion rate.

Expected lift: +6% to +10% favoring "below the fold" placement.

Sample size: ~161,000 sessions for a +10% lift at a 2.0% baseline, and roughly 440,000 at +6%.

Test 3 — Tour Thumbnail vs. Full Embed

Variant A: small linked thumbnail with "Take the 3D Tour" overlay (see five-second test post). Variant B: full embedded iframe.

Primary metric: conversion rate.

Expected result: thumbnail wins on mobile, embed wins on desktop. The interesting outcome is the device-segmented data, not the headline winner.

Sample size: ~113,000 sessions per device segment (for a +12% difference at a 2.0% baseline), so ~226,000 total.
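Reading the device-segmented result means running the same significance test once per segment. A minimal sketch using a pooled two-proportion z-test; the function name is hypothetical, the conversion counts are made up for illustration, and variant A is the thumbnail while B is the full embed.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for B vs. A with a pooled-variance z-test."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se  # positive z means the embed (B) converted better
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: (thumbnail bookings, thumbnail sessions, embed bookings, embed sessions)
segments = {
    "mobile": (1_350, 60_000, 1_200, 60_000),
    "desktop": (1_150, 55_000, 1_290, 55_000),
}
for name, counts in segments.items():
    z, p = two_proportion_z_test(*counts)
    leaning = "embed" if z > 0 else "thumbnail"
    print(f"{name}: z = {z:+.2f}, p = {p:.4f}, leaning {leaning}")
```

Two segments means two comparisons; requiring p < 0.025 in each (a Bonferroni correction) keeps the overall false-positive rate at or below 5%.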

The Three Tests That Produce Noise

Avoid these on boutique sample sizes:

Test A — Tour Variant vs. Tour Variant

Testing two different Matterport tours (different rooms featured, different intro spaces). The lift between two tour variants is typically <3%, which at a 2.0% baseline requires well over 1.5 million sessions to detect. Don't bother.
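Running the same math in reverse shows why. Given the sessions you can realistically send to each arm, the sketch below estimates the smallest relative lift detectable at 95% confidence and 80% power. The function name is hypothetical, and the baseline is the 2.0% assumption from the sample size table.

```python
from math import sqrt
from statistics import NormalDist


def minimum_detectable_lift(baseline: float, n_per_variant: int,
                            alpha: float = 0.05, power: float = 0.80) -> float:
    """Approximate smallest relative lift a two-sided test of this size can detect.

    Simplification: both arms get the baseline variance, which slightly
    understates the lift a precise calculator would report.
    """
    z_total = NormalDist().inv_cdf(1 - alpha / 2) + NormalDist().inv_cdf(power)
    absolute_lift = z_total * sqrt(2 * baseline * (1 - baseline) / n_per_variant)
    return absolute_lift / baseline


for n in (5_000, 50_000, 500_000):
    print(f"{n:>7,} sessions per variant: +{minimum_detectable_lift(0.02, n):.1%}")
```

At a 2.0% baseline, even 500,000 sessions per variant only gets you down to roughly a +4% lift, which is why tour-vs-tour, audio, and copy tests are noise at boutique traffic.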

Test B — Tour with Audio vs. Tour without Audio

Smaller effect size; high variance from user attention; needs enterprise sample. Run it qualitatively (5-second test, user testing) instead of quantitatively.

Test C — Tour CTA Copy

"Take the 3D Tour" vs. "Explore the Property" vs. "View in 3D." Effect size of 1–3%. Not worth the sample size or test duration. Pick the copy that matches your brand and move on.

How Long to Run the Test

Three rules:

1. Run for at least 2 full weeks, even if you've hit your sample size earlier. This catches day-of-week effects (a property's mid-week and weekend visitor mix is different).

2. Don't peek at significance daily. Checking results repeatedly and stopping the moment a variant looks significant inflates false positives unless the analysis is adjusted for those repeated looks. Either commit to a fixed sample size and look once at the end, or use a tool that supports proper sequential analysis (VWO Smart Stats, Hotjar's Bayesian framework).

3. Run for at least 1 full booking-window cycle. If your average booking lead time is 28 days, run for 35+ days so most "intent → booking" cycles complete inside the test window.
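A small simulation shows what daily peeking costs. It assumes an A/A test (both arms identical, so every declared winner is a false positive), equal daily traffic in each arm, 30 daily looks, and the normal approximation to the z-test, under which the running z-statistic after k days is a sum of k independent standard normal draws divided by √k. The numbers are illustrative, not client data, and the function name is hypothetical.

```python
import random
from math import sqrt

Z_CRITICAL = 1.96  # two-sided 5% significance threshold


def false_positive_rates(n_experiments: int = 20_000, looks: int = 30,
                         seed: int = 7) -> tuple[float, float]:
    """Simulate A/A tests; return (daily-peeking rate, single-look rate).

    Under the null with equal daily batches, the running z-statistic after
    k days is S_k / sqrt(k), where S_k sums k independent N(0, 1) draws.
    """
    rng = random.Random(seed)
    peeking_hits = 0
    fixed_hits = 0
    for _ in range(n_experiments):
        running_sum = 0.0
        crossed = False
        for day in range(1, looks + 1):
            running_sum += rng.gauss(0.0, 1.0)
            if abs(running_sum / sqrt(day)) > Z_CRITICAL:
                crossed = True  # a daily peeker would stop and declare a winner
        peeking_hits += crossed
        fixed_hits += abs(running_sum / sqrt(looks)) > Z_CRITICAL  # one look at the end
    return peeking_hits / n_experiments, fixed_hits / n_experiments


if __name__ == "__main__":
    peek, single = false_positive_rates()
    print(f"peeking daily: {peek:.1%} false positives; one look at the end: {single:.1%}")
```

With these settings the daily peeker's false-positive rate comes out several times the nominal 5%, while a single look at the end stays close to 5%.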

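For a typical boutique with a 28-day booking lead time, rules 1 and 3 alone set a floor of 5–6 weeks. At 8,000 monthly sessions and a +12% effect size, though, the sample size requirement sets the real duration: roughly 14 months.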
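The three rules and the sample size combine into one calendar number. A minimal sketch, assuming a 30-day month, a 7-day buffer beyond the booking lead time (our reading of the 28 → 35 day example in rule 3), and rounding up to whole weeks so each weekday is equally represented; the function name is hypothetical.

```python
from math import ceil


def planned_duration_days(sessions_needed: int, monthly_sessions: int,
                          booking_lead_time_days: int, buffer_days: int = 7) -> int:
    """Calendar days to run a fixed-horizon test under the three rules above."""
    daily_sessions = monthly_sessions / 30                     # assumes a 30-day month
    days_for_sample = ceil(sessions_needed / daily_sessions)   # reach the planned sample
    days = max(
        14,                                    # rule 1: at least two full weeks
        days_for_sample,                       # rule 2: fixed sample, one look at the end
        booking_lead_time_days + buffer_days,  # rule 3: one booking-window cycle
    )
    return ceil(days / 7) * 7                  # whole weeks, so each weekday appears equally


if __name__ == "__main__":
    days = planned_duration_days(113_100, 8_000, 28)
    print(f"{days} days (about {days / 30:.0f} months)")
```

For the boutique in this post (113,100 sessions, 8,000 per month, 28-day lead time) it returns 427 days, about 14 months; at higher traffic the two-week and booking-window floors take over.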

After the Test

Whatever you do, write the result down. The most common failure of boutique CRO programs isn't running tests; it's losing the institutional memory of what was tested and what won. Keep a simple shared doc with the hypothesis, variant A, variant B, primary metric, result, and date for every test. Future-you (or your successor) will be grateful.
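If the shared doc is a spreadsheet, a CSV with one row per test is enough. A minimal sketch, assuming a local CSV file with exactly the six fields listed above; the class name, function names, and file layout are hypothetical.

```python
import csv
from dataclasses import asdict, dataclass, fields
from pathlib import Path


@dataclass
class ExperimentRecord:
    hypothesis: str
    variant_a: str
    variant_b: str
    primary_metric: str
    result: str
    date: str  # ISO 8601, e.g. "2024-05-01"


def append_record(path: Path, record: ExperimentRecord) -> None:
    """Append one test to the shared log, writing the header row only once."""
    write_header = not path.exists() or path.stat().st_size == 0
    columns = [f.name for f in fields(ExperimentRecord)]
    with path.open("a", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=columns)
        if write_header:
            writer.writeheader()
        writer.writerow(asdict(record))


def read_records(path: Path) -> list[ExperimentRecord]:
    """Load every logged test back into records."""
    with path.open(newline="", encoding="utf-8") as handle:
        return [ExperimentRecord(**row) for row in csv.DictReader(handle)]
```

Appending rather than overwriting keeps every past test in the file, which is the point of the log.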

If the test wins, ship it and run the next test. The four ranked tests in the room-type page teardown plus the three tour-specific tests above form a testing roadmap, paced by your traffic and the sample sizes above, that compounds toward the top-quartile tier of our direct booking conversion benchmarks.

If the test loses, you have a more interesting question. Most "losing" virtual tour tests turn out to be implementation problems — the tour was loading slowly, was placed badly, or wasn't tracked right. Rerun before concluding that tours don't work for your property.

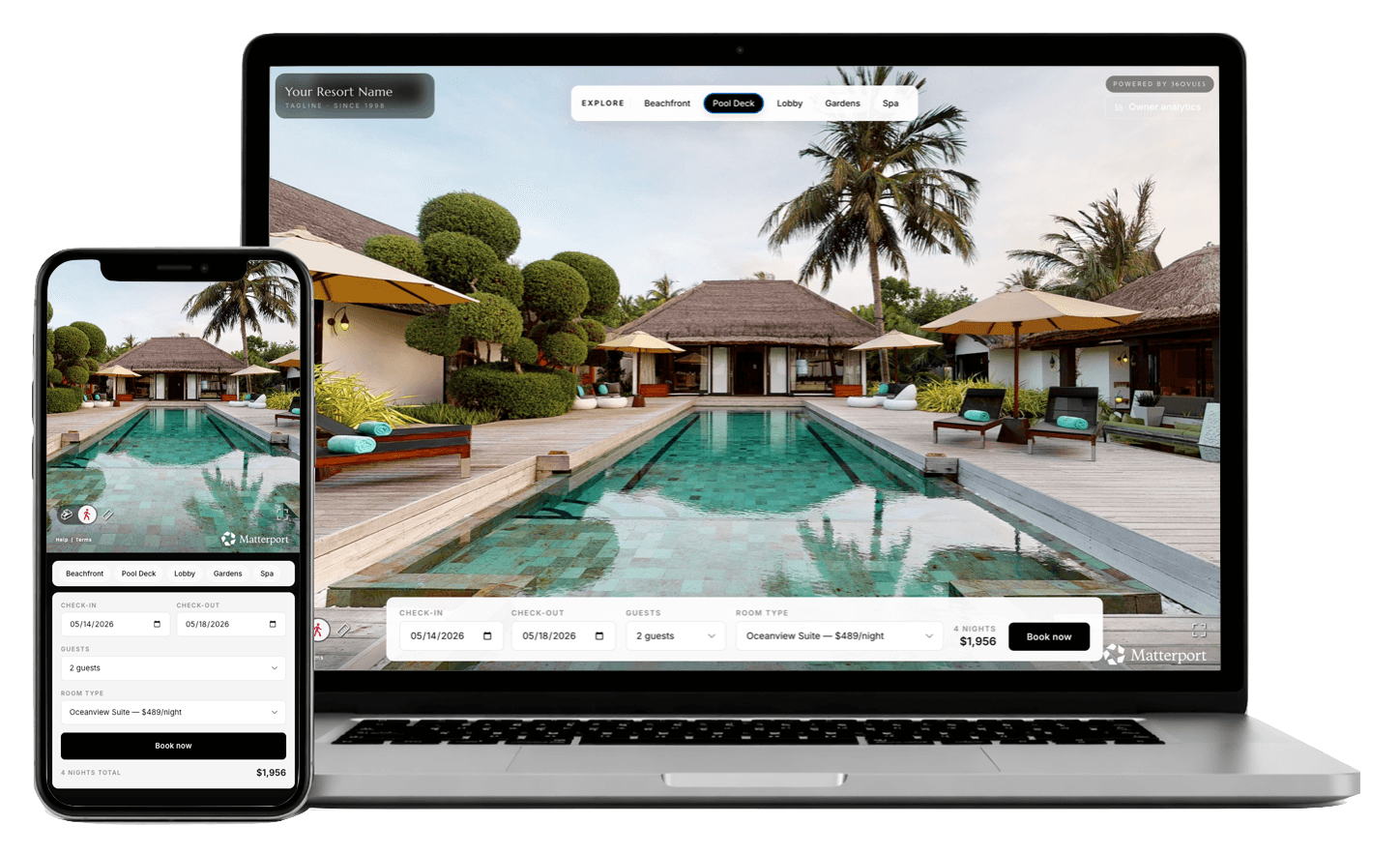

About 360VUES — Matterport 3D capture and virtual tour production. We share anonymized A/B test results from client deployments quarterly; the +11–14% conversion lift number cited throughout this series is the median across more than 60 tested properties.